Shape Optimization for Consumer-Level Digital Fabrication

December 13th, 2017, 3:30pm, Room SP3 058

Abstract:

Traditionally, 3d modelling in computer graphics deals with the geometric and visual aspects of 3d shapes. On the other hand, due to the growing capabilities of personal digital fabrication technology and its spread into offices and homes, 3d models are increasingly entering the physical world. Therefore, the physical properties of 3d models come into focus. For example, 3d-printed models should be able to stand balanced in a desired pose without toppling over, or should possess certain properties, such as a specific sound when vibrating or a minimum structural strength when exposed to external forces. It is thus desirable to have methods that allow the user to specify the intended physical properties of an object in addition to its 3d geometry, and which automatically take these properties into account when generating a specification for a 3d printer.

In this talk I will give an introduction to such computational design problems and introduce a methodology for efficient non-linear shape optimization.

About the Speaker:

Przemyslaw Musialski is a university assistant and principal investigator at TU Wien (Vienna University of Technology), Institute of Discrete Mathematics and Geometry, and a member of the Center for Geometry and Computational Design (GCD), where he heads the Computational Fabrication group.

He obtained the MSc degree (Diplom-Mediensystemwissenschaftler) in 2007 from the Bauhaus University Weimar and the PhD degree (Dr.techn.) in 2010 from TU Wien. From 2007 to 2011 he was with VRVis Research Center in Vienna, and from 2011 to 2012 he was a postdoctoral scholar at Arizona State University. He has been with TU Wien since April 2012.

Haptic Mixed Reality - Exploring Applications in Surgical Simulation

November 29th, 2017, 10:15am, Room HF 9904

Abstract:

In haptic mixed reality, touch feedback of the real environment is merged with virtual haptic stimuli, thus altering the haptic perception of objects. In our research, we explore the feasibility of using this paradigm for medical training systems. In this talk, an overview of the technique will be provided and application scenarios will be outlined. In earlier work, we explored the use of such haptic augmentation in the context of tissue palpation. More recently, we have focused on the modification of surgical instruments to provide augmentation during interaction with mock-up anatomical models; specifically, a prototype system of a modified surgical bone drill has been developed. The setup is capable of providing different stiffness augmentations, representing varying bone densities, based on twisted-string actuation.

About the Speaker:

Matthias Harders studied Computer Science with focus on Medical Informatics at the University of Hildesheim, Technical University Braunschweig, and University of Houston, TX, till 1999. He completed his PhD in 2003 at ETH Zurich, where he also obtained his habilitation in 2007, in the area "Virtual Reality in Medicine". He worked at ETH until 2012, with short research visits in the USA, Japan, and Australia. Following a position as Reader at the University of Sheffield, UK, he became a full professor in 2014 at the University of Innsbruck, where he leads the Interactive Graphics and Simulation group. He is a co-founder of the IEEE Technical Committee on Haptics, the EuroHaptics Society, and the IEEE Transactions on Haptics. In 2008 he co-founded the spin-off company VirtaMed, which develops medical training systems. His current research focus is on computer haptics, virtual/augmented reality, and physically-based simulation.

Taking AR to Task: Explaining Where and How in the Real World

October 18th, 2017, 3:00pm, Room SP3 0063

Abstract:

Researchers have been actively exploring Augmented Reality (AR) and Virtual Reality (VR) for a half century, first in the lab and later in the streets. Over the past few years, however, VR head-worn display developer kits have metamorphosed into early consumer products that are far superior to what most VR researchers previously had available. And compelling AR head-worn display developer kits have now been released that promise to beget everyday see-through eyewear.

What can the upcoming generation of consumer AR make possible by interactively integrating virtual media with our experience of the physical world? I will try to answer this question in part by presenting some of the research being done by Columbia’s Computer Graphics and User Interfaces Lab to explore how we can support users in performing skilled tasks. Examples I will discuss range from providing standalone assistance, to enabling collaboration between a remote expert and a local user. I will address infrastructure spanning the gamut from lightweight, monoscopic eyewear, to hybrid user interfaces that synergistically combine tracked, stereoscopic, see-through head-worn displays with other displays and interaction devices.

About the Speaker:

Steve Feiner is a Professor of Computer Science at Columbia University, where he directs the Computer Graphics and User Interfaces Lab, and codirects the Columbia Vision and Graphics Center. His lab has been doing VR, AR and wearable research for over 25 years, designing and evaluating novel 3D interaction and visualization techniques, creating the first outdoor mobile AR system using a see-through head-worn display, and pioneering experimental applications of AR to fields such as tourism, journalism, maintenance, and construction. Steve received an A.B. in Music and a Ph.D. in Computer Science, both from Brown University. He is coauthor of Computer Graphics: Principles and Practice, received the IEEE VGTC Virtual Reality Career Award, and was elected to the CHI Academy. Together with his students, he has won the ACM UIST Lasting Impact Award, the ISWC Early Innovator Award, and best paper awards at ACM UIST, ACM CHI, ACM VRST, IEEE ISMAR, and IEEE 3DUI. Steve is lead advisor to Meta, the AR company.

Adaptive GPU Scheduling for Efficient Numerical Computing and Computer Graphics

June 21st, 2017, 1:45pm, Room HS14

Abstract:

In this talk, I will discuss our efforts towards dynamic GPU scheduling, which allow a larger class of algorithms to run efficiently on the GPU. Applying these ideas to well-known algorithms, we push the performance beyond state-of-the-art approaches in such contested fields as sparse matrix-vector multiplication, while allowing for more flexibility. Applying these ideas to procedural geometry generation, we propose the fastest approach for generating buildings and large cities directly on the GPU. Analyzing the scheduling behavior, we present an autotuner framework that finds an optimal schedule for the execution of a specific procedural generator on a specific GPU.

About the Speaker:

Markus Steinberger is an Assistant Professor at Graz University of Technology, Austria, leading the GPU Scheduling and Visualization Group. He has an MSc and a PhD in Computer Science from Graz University of Technology, Austria. On finishing his PhD, he received the highest possible honor for achievement in Austria, the promotion sub auspiciis praesidentis rei publicae. After his PhD he was with the mobile computer vision research group at NVIDIA, California, before leading his own group on GPU Scheduling and parallel computing at Graz University of Technology (2014-2015) and at the Max-Planck-Center for Visual Computing and Communication in Saarbrücken (2015-2017). In 2014 he became the first Austrian to win the GI Dissertation Prize. He also won the OCG Heinz Zemanek Prize in 2016. His wide research interests are reflected by the numerous awards won by his papers, including ACM CHI, IEEE Infovis, Eurographics, ACM NPAR, EG/ACM HPG, IEEE HPEC best paper and honorable mention awards.

Computational microscopy for neural activity tracking

May 23rd, 2017, 4:00pm, Room SP3 0063

Abstract:

This talk will describe new computational methods for 3D imaging in scattering media. We demonstrate both single-shot lenslet-based imaging and detection-side aperture coding of angle (Fourier) space for capturing 4D phase-space (e.g. light field) datasets with fast acquisition times. We develop efficient 3D reconstruction methods to achieve real-time 3D neural activity tracking with high resolution across a large volume.

About the Speaker:

Laura Waller is the Ted Van Duzer Endowed Associate Professor of Electrical Engineering and Computer Sciences at UC Berkeley, a Senior Fellow at the Berkeley Institute of Data Science, and an affiliate in Bioengineering and Applied Sciences & Technology. She received B.S., M.Eng. and Ph.D. degrees from the Massachusetts Institute of Technology (MIT) in 2004, 2005 and 2010, and was a Postdoctoral Researcher and Lecturer of Physics at Princeton University from 2010 to 2012. She is a recipient of the Moore Foundation Data-Driven Investigator Award, Bakar Fellowship, Carol D. Soc Distinguished Graduate Mentoring Award, Agilent Early Career Professor Award Finalist, NSF CAREER Award, Chan-Zuckerberg Biohub Investigator Award and Packard Fellowship for Science and Engineering.

Beyond Fun and Games: VR as a Tool of the Trade

April 26th, 2017, 3:30pm, Room SP3 0063

Abstract:

The recent resurgence of VR is exciting and encouraging because the technology is at a point where it will soon be available to a very large audience in the consumer market. However, it has also been a little disappointing to see that VR technology is mostly being portrayed as the ultimate gaming environment and the new way to experience movies. VR is much more than that: for the past twenty years, a large number of groups around the world have been using VR in engineering, design, training, medical treatments and many other areas beyond gaming and entertainment that seem to have been forgotten in the public perception. Furthermore, VR technology is also much more than goggles; there are many ways to build devices and systems to immerse users in virtual environments. And finally, there are also a lot of challenges related to creating engaging, effective, and safe VR applications. This talk will present our experiences in developing VR technology, creating applications in many industry fields, exploring the effect of VR exposure on users, and experimenting with different immersive interaction models. The talk will provide a much wider perspective on what VR is, its benefits and limitations, and how it has the potential to become a key technology to improve many aspects of human life.

About the Speaker:

Carolina Cruz-Neira is a pioneer in the areas of virtual reality and interactive visualization, having created and deployed a variety of technologies that have become standard tools in industry, government and academia. She is known world-wide for being the creator of the CAVE virtual reality system, which was her PhD work, and for VR Juggler, an open source VR application development environment. Her work with advanced technologies is driven by simplicity, applicability, and providing value to a wide range of disciplines and businesses. This drive makes her work highly multi-disciplinary and collaborative, and she has received multi-million dollar awards from the National Science Foundation, the Army Research Lab, the Department of Energy, Deere and Company, and others. She has dedicated a part of her career to transferring research results in virtual reality into daily use in industry and research organizations and to leading entrepreneurial initiatives to commercialize results of her VR research. She is also recognized for having founded and led very successful virtual reality research centers, like the Virtual Reality Applications Center at Iowa State University, the Louisiana Immersive Technologies Enterprise and now the Emerging Analytics Center. She serves on many international technology boards and government technology advisory committees, and outside the lab, she enjoys extrapolating her technology research with the arts and the humanities through forward-looking public performances and installations. She has been named by BusinessWeek magazine as a “rising research star” in the next generation of computer science pioneers, has been inducted as an ACM Computer Pioneer, and has received the IEEE Virtual Reality Technical Achievement Award and the Distinguished Career Award from the International Digital Media & Arts Society, among other national and international recognitions.

Visualization for Applications in Energy, Industry, and Engineering

March 15th, 2017, 2:00pm, Kepler Building, Room K 001A

Abstract:

Interactive visualization has great potential for making real-world tasks more efficient and effective. In my talk, I will focus on recurring high-level tasks along the data analysis process, ranging from data quality assessment and statistical modeling to multi-criteria decision making. Novel visualization approaches to support these tasks will be illustrated based on real-world application problems from the energy sector, industrial manufacturing, and simulation-based development of powertrain systems. All shown techniques are potentially applicable to multi- and high-dimensional data in other domains as well. I will conclude my talk with a personal reflection of lessons learned in 10 years of doing applied research in close collaboration with industry partners.

About the Speaker:

Harald Piringer is head of the Visual Analysis Group at the VRVis Research Center in Vienna, Austria. His research interests include Visual Analytics for simulation and statistical modeling with a focus on integration in real-world settings. He has authored and co-authored more than 25 internationally refereed scientific publications, among them two best papers, and is a regular member of program committees for conferences like IEEE VAST and EuroVis. Harald Piringer received his Master's degree and his Ph.D. sub auspiciis praesidentis from Vienna University of Technology.

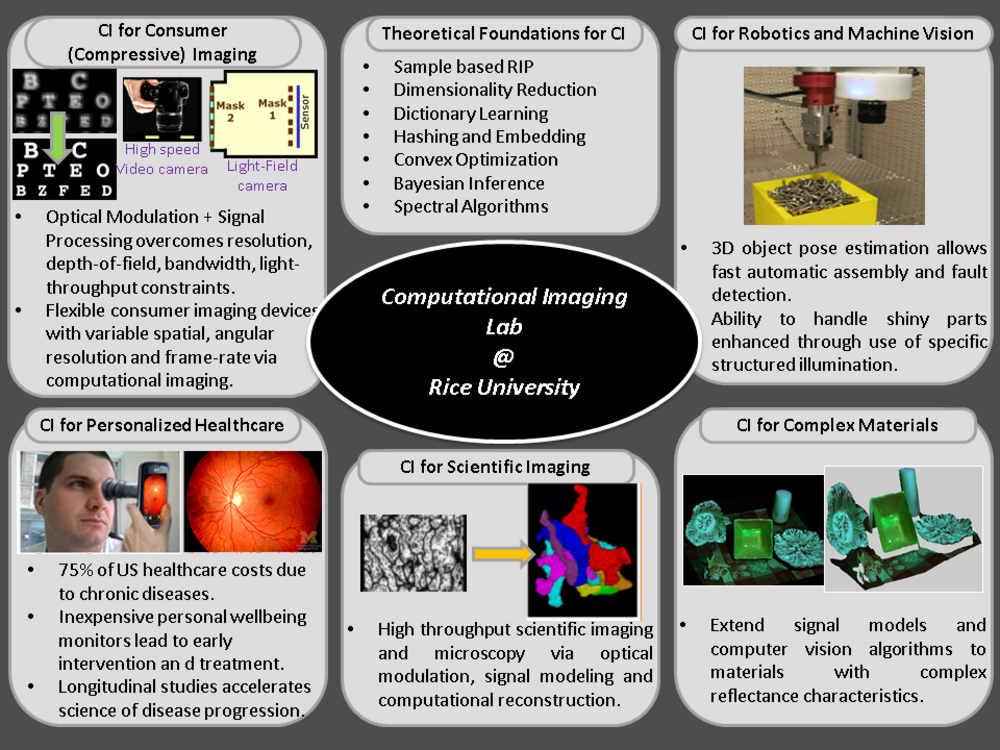

Computational Imaging: Beyond the limits imposed by lenses

January 23rd, 2017, 3:30pm, Science Park 3, Room 063

Abstract:

The lens has long been a central element of cameras, since its early use in the mid-nineteenth century by Niépce, Talbot, and Daguerre. The role of the lens, from the Daguerreotype to modern digital cameras, is to refract light to achieve a one-to-one mapping between a point in the scene and a point on the sensor. This effect enables the sensor to compute a particular two-dimensional (2D) integral of the incident 4D light-field. We propose a radical departure from this practice and the many limitations it imposes. In the talk we focus on two inter-related research projects that attempt to go beyond lens-based imaging.

First, we discuss our lab’s recent efforts to build flat, extremely thin imaging devices by replacing the lens in a conventional camera with an amplitude mask and computational reconstruction algorithms. These lensless cameras, called FlatCams, can be less than a millimeter in thickness and enable applications where size, weight, thickness or cost are the driving factors. Second, we discuss high-resolution, long-distance imaging using Fourier Ptychography, where the need for a large-aperture, aberration-corrected lens is replaced by a camera array and associated phase retrieval algorithms, resulting again in order-of-magnitude reductions in size, weight and cost.

About the Speaker:

Ashok Veeraraghavan is currently an Assistant Professor of Electrical and Computer Engineering at Rice University, TX, USA. Before joining Rice University, he spent three wonderful and fun-filled years as a Research Scientist at Mitsubishi Electric Research Labs in Cambridge, MA. He received his Bachelor's in Electrical Engineering from the Indian Institute of Technology, Madras in 2002 and M.S. and Ph.D. degrees from the Department of Electrical and Computer Engineering at the University of Maryland, College Park in 2004 and 2008 respectively. His thesis received the Doctoral Dissertation award from the Department of Electrical and Computer Engineering at the University of Maryland. He loves playing, talking, and pretty much anything to do with the slow and boring but enthralling game of cricket.