Project description

Acoustic Beamforming

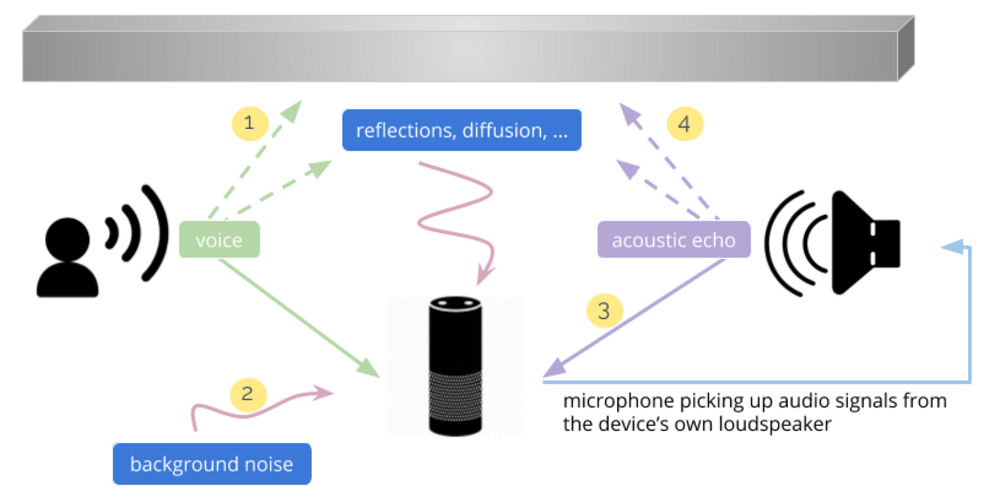

For humans, voice is the most natural way of communicating with each other. For this reason, the desire to interact with computers by voice has been present throughout the past 50 years. The performance of modern computers, combined with recent advances in artificial intelligence, has made it possible for devices to recognize voice commands. Cloud-based voice assistants, currently available on the market, are an emerging technology that lets users control electrical household devices, gather information from the Internet, and set up all kinds of reminders. These interfaces use microphones that capture the spoken commands and transmit them to the cloud for further processing. As the figure above illustrates, many challenges must be overcome in a real environment to obtain a clean voice signal that the device can interpret. For example, reflections (1), background noise (2), and acoustic echoes (3, 4) overlap with the desired voice signal and consequently degrade the word recognition rate.

Ph.D. Project Facts

ISP Research Team

Andreas Gaich

Eugen Pfann

Mario Huemer

Partners

Infineon Villach

Infineon Munich

Duration

Nov. 2015 - Oct. 2019

Therefore, microphone arrays can be used to enhance speech quality and suppress ambient noise. Microphone arrays exploit the spatial information of the sound sources: they focus on the direction of the desired source while suppressing sources from other directions. This technique is called beamforming.
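One of the simplest beamformers is the delay-and-sum beamformer: each microphone signal is delayed so that a plane wave from the look direction adds up in phase, while waves from other directions add up partly out of phase and are attenuated. The Python sketch below computes its narrowband response for a uniform linear array. It is a textbook illustration, not one of the algorithms developed in this project; the array geometry, the assumed speed of sound of 343 m/s, and the function names are hypothetical choices for the example.

```python
import cmath
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at about 20 degrees C


def steering_vector(num_mics, spacing, freq, angle_deg):
    """Phase factors exp(-j*2*pi*f*tau_m) of a plane wave at a uniform linear
    array; angle_deg is measured from broadside, spacing is in metres."""
    delay_step = spacing * math.sin(math.radians(angle_deg)) / SPEED_OF_SOUND
    return [cmath.exp(-2j * math.pi * freq * m * delay_step) for m in range(num_mics)]


def delay_and_sum_response(num_mics, spacing, freq, look_deg, source_deg):
    """Magnitude response of a delay-and-sum beamformer steered to look_deg
    for a narrowband plane wave arriving from source_deg."""
    weights = steering_vector(num_mics, spacing, freq, look_deg)
    source = steering_vector(num_mics, spacing, freq, source_deg)
    # Multiplying by the conjugate weight undoes each microphone's delay for the
    # look direction; averaging then sums the aligned signals with unit gain.
    output = sum(w.conjugate() * s for w, s in zip(weights, source)) / num_mics
    return abs(output)


if __name__ == "__main__":
    # Hypothetical array: 4 microphones, 4 cm spacing, 2 kHz, steered to broadside.
    for angle in (0, 30, 60, 90):
        gain = delay_and_sum_response(4, 0.04, 2000.0, 0.0, angle)
        print(f"{angle:3d} deg: {20 * math.log10(gain):6.1f} dB")
```

For this hypothetical array, the printed gain is 0 dB in the look direction and falls off steadily toward end-fire (90 degrees), which is the spatial selectivity described above.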

In this Ph.D. project, we develop model-based as well as deep-learning-based beamforming algorithms for voice assistant applications and investigate how microphone and array imperfections, such as microphone self-noise, complex frequency response mismatch between the microphones in the array, and microphone displacement, influence the performance of these algorithms.
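The effect of a frequency response mismatch can be illustrated with the simplest first-order differential array: two closely spaced omnidirectional microphones, where the rear signal is delayed by the acoustic travel time between them and subtracted from the front signal, producing a cardioid pattern with a null toward the rear. The Python sketch below evaluates this delay-and-subtract structure with a complex gain mismatch on the rear microphone. It is a simplified, non-adaptive model written for illustration only, not the analysis from the project's publications; the spacing, frequencies, mismatch values, speed of sound, and function names are assumptions.

```python
import cmath
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed


def differential_response(freq, spacing, angle_deg, gain_db=0.0, phase_deg=0.0):
    """Magnitude response of a two-microphone delay-and-subtract array whose
    internal delay equals the travel time spacing / c (cardioid design).

    angle_deg: 0 = source in front (end-fire), 180 = source at the rear.
    gain_db, phase_deg: hypothetical complex mismatch of the rear microphone
    relative to the front microphone (0 dB and 0 deg = perfectly matched).
    """
    omega = 2 * math.pi * freq
    internal_delay = spacing / SPEED_OF_SOUND
    # Additional travel time from the front to the rear microphone.
    propagation_delay = spacing * math.cos(math.radians(angle_deg)) / SPEED_OF_SOUND
    mismatch = 10 ** (gain_db / 20) * cmath.exp(1j * math.radians(phase_deg))
    rear = mismatch * cmath.exp(-1j * omega * (internal_delay + propagation_delay))
    return abs(1 - rear)


def null_depth_db(freq, spacing, gain_db=0.0, phase_deg=0.0):
    """Rear-to-front response ratio in dB; more negative means a deeper null."""
    front = differential_response(freq, spacing, 0.0, gain_db, phase_deg)
    rear = differential_response(freq, spacing, 180.0, gain_db, phase_deg)
    if rear == 0.0:
        return -math.inf  # ideal, perfectly matched case
    return 20 * math.log10(rear / front)


if __name__ == "__main__":
    # Hypothetical array: 1 cm spacing, evaluated at 1 kHz.
    for gain_db, phase_deg in ((0.0, 0.0), (0.5, 0.0), (0.0, 2.0)):
        depth = null_depth_db(1000.0, 0.01, gain_db, phase_deg)
        print(f"gain {gain_db} dB, phase {phase_deg} deg: null depth {depth:.1f} dB")
```

In this model, a perfectly matched pair has an ideal rear null, whereas a gain mismatch of only 0.5 dB or a phase mismatch of 2 degrees limits the null to a finite depth. Because the front response of a differential array falls with decreasing frequency, the same mismatch degrades the null even further at low frequencies.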

Acoustic Event Detection

Sound-capturing devices are becoming increasingly ubiquitous in many kinds of environments, ranging from homes and offices to car interiors and mobile phones. These devices combine ease of installation with decreasing costs, and their main purpose is typically the recording and processing of speech. At the same time, however, they can be used to monitor the environment in order to detect events that have a particular acoustic signature, for example a home appliance breaking down, a water leak, an intrusion, or a person or object falling to the ground.

In this project, machine learning algorithms are applied to acoustic event detection. A main focus lies on increasing algorithm reliability because, depending on the type of event, a false alarm can have consequences as negative as those of a missed event.
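The trade-off between false alarms and missed detections can be made explicit when choosing the decision threshold applied to a detector's output scores. The Python sketch below picks the threshold that minimizes a weighted count of both error types. The scores, labels, cost weights, and function names are hypothetical, and this is a generic illustration rather than the detection method used in the project.

```python
def error_counts(scores, labels, threshold):
    """Return (false_alarms, misses) when an event is declared for score >= threshold.

    labels: 1 (or True) if the event actually occurred in that frame or clip.
    """
    false_alarms = sum(1 for s, y in zip(scores, labels) if s >= threshold and not y)
    misses = sum(1 for s, y in zip(scores, labels) if s < threshold and y)
    return false_alarms, misses


def pick_threshold(scores, labels, cost_false_alarm=1.0, cost_miss=1.0):
    """Choose the candidate threshold with the lowest weighted error cost."""
    # Every observed score is a candidate; infinity means "never raise an alarm".
    candidates = sorted(set(scores)) + [float("inf")]

    def weighted_cost(threshold):
        false_alarms, misses = error_counts(scores, labels, threshold)
        return cost_false_alarm * false_alarms + cost_miss * misses

    return min(candidates, key=weighted_cost)


if __name__ == "__main__":
    # Hypothetical detector scores and ground-truth labels.
    scores = [0.1, 0.4, 0.35, 0.8, 0.7, 0.2]
    labels = [0, 0, 1, 1, 1, 0]
    print(pick_threshold(scores, labels))                        # equal costs
    print(pick_threshold(scores, labels, cost_false_alarm=5.0))  # alarms are costly
```

With equal costs, the sketch selects 0.35; making a false alarm five times as costly as a miss raises the threshold to 0.7, trading one missed event for fewer false alarms.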

Publications

Gaich A., Huemer M.: "Influence of MEMS Microphone Imperfections on the Performance of First-Order Adaptive Differential Microphone Arrays," in Computer Aided Systems Theory - EUROCAST 2017, Lecture Notes in Computer Science, Vol. 10672, Springer International Publishing, Cham, pp. 170-178, 2018